I continue to be a lucky fellow! Day 2 of the conference started with a keynote from Dylan Wiliam, one of my education researcher/writer heroes. He used his keynote to highlight and expand on the themes he wrote about in Creating the Schools Our Children Need.

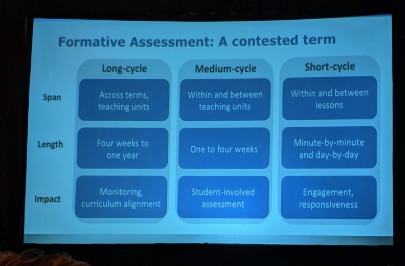

If you haven’t read it yet, get to it! It’s one of my favorite education books. In both the book and the keynote, Wiliam walked us through research on why many current large-scale education reforms (like hiring “smarter” teachers, firing “bad” teachers, funding voucher/charter schools, copying other countries’ education systems, and offering more differentiated/personalized instruction) aren’t effective. The rest of the book and talk were devoted to what might work: investing in current teachers, promoting knowledge-rich curricula, and implementing effective formative assessment processes. Wiliam acknowledges that this is hard work (it involves changing habits, which is always tough) and argues, with evidence at every point, that teachers need choice, flexibility, chances to take small steps, and supportive accountability as they figure out how to integrate “short cycle” formative assessment habits into their teaching. If you ever get a chance to hear Dylan Wiliam present, do it! The keynote was filled with data, analysis, and clear thinking.

The rest of the morning and part of the afternoon were devoted to breakout sessions. I got to hear Jay McTighe talk about designing performance assessment tasks. He shares multiple resources on his website, and he’s worked with many (many!) districts. I liked hearing about his practical experiences, and he provided several intriguing examples of performance assessments. One thought/concern: I didn’t hear much during the presentation about research on performance assessments as an intervention, and I’d like to hear more. I think research on how effective different kinds of performance assessments are would help refine the idea quite a bit and help teachers/administrators figure out how to use it most effectively.

And a specific, related concern: there is a “debate” between folks who say they advocate “direct instruction” and folks who advocate “discovery/problem-based/inquiry-based/project-based/etc.” learning. I know some teachers who identify as “project-based” teachers. During his talk, McTighe discussed “authentic” and “inauthentic” tasks. I don’t think McTighe thinks about it this way, but I worry that some teachers/administrators see information about authentic tasks, performance assessment, DoK level 3 and 4 tasks, etc. and wrongly assume that these kinds of assessments and activities are “better” or more important than other kinds of activities and assessments. This is a generalization, but I think this “debate” is a false dichotomy: we shouldn’t be thinking in terms of direct instruction versus problem-based learning, etc. Underneath it all, the reality of learning is that for every learning task, students need to be able to retrieve some skills and information from their long-term memory and get them into their working memory in order to do the cognitive task we’ve asked them to do. If those skills or that information aren’t in their long-term memory, or they can’t retrieve them, then we need to help them with that, and that probably means some direct instruction. Despite its title, I think this article by Kirschner, Sweller, and Clark presents convincing evidence that we need to be thoughtful about what skills and knowledge students need before they try to tackle important “inquiry-based” tasks.

Students absolutely need chances to use skills and knowledge to answer important questions, and problem-based learning (and similar) activities can be great ways to do that. But it’s not an either/or. It’s silly to argue about whether direct instruction or problem-based learning is better or more important. I would like to hear McTighe talk more about this underlying learning reality.

Then I went to hear Sue Brookhart talk about “Comprehensive and Balanced Assessment Systems,” a paper developed from last year’s LDi Formative Assessment conference.

This paper is worth reading. Sue led us through a group discussion activity that challenged us to think comprehensively about our district’s assessment system. We examined how comprehensive (what it measures, at what levels, and where) and balanced (what data are returned from which assessments, and for which purposes those assessments are appropriate) our systems are, and we identified gaps. I think this activity would have been more effective if Sue had first modeled the analysis/discussion on an example, but it was a good discussion.

Ready for day 3 tomorrow!